SFA200X2/SFA400X2

DDN Storage Fusion Architecture® (SFA®) appliances are purpose-built to deliver scalable flash performance and capacity to flexibly meet your changing business demands.

The SFA NVMe platforms deliver all-NVMe storage with multiple high-speed connectivity options. At up to 90GB/s, the 2U SFA NVMe-only and hybrid appliances are the fastest storage solutions in the industry and hold up to 24 NVMe SSDs in a compact form factor. Built with 3rd Gen Intel® Xeon® Scalable processors, the SFA NVMe platforms offer a level of performance density that makes them ideal for data centers that have limited space and require rock-solid, high-performance flash in a scale-out architecture. Start with a single enclosure and scale out to meet file system or block requirements. Hybrid configurations scale up to 24 NVMe devices plus 900 SAS devices (spinning disk or SSD).

BLINDING PERFORMANCE THAT SCALES ON DEMAND

Whether you are accelerating an analytics workload, reducing latencies for tough NoSQL databases, or beginning a Deep Learning project with modest training sets, the SFA NVMe platforms are ideal as cost-effective building blocks. Designed to help you get the most out of your investment, the platform has an internal switch fabric with up to 288 lanes of PCIe Gen 4, plus up to 64 lanes providing client connectivity. SFA400NVX2 can add 16 x4 ports (64 lanes) of SAS-4 connectivity, providing hybrid expansion capability.
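To put the lane counts above in perspective, the following is a rough back-of-the-envelope sketch, not a DDN sizing tool. It assumes only the published PCIe Gen 4 signaling rate (16 GT/s per lane with 128b/130b encoding) and ignores protocol overhead, so real-world throughput will be lower. The function names are hypothetical and chosen for illustration.

```python
# Rough theoretical bandwidth estimate for PCIe Gen 4 lane counts.
# Assumption: 16 GT/s per lane with 128b/130b line encoding (PCIe Gen 4),
# one direction only, no packet or protocol overhead included.

PCIE_GEN4_GT_PER_S = 16.0          # giga-transfers per second per lane
ENCODING_EFFICIENCY = 128 / 130    # 128b/130b line encoding


def pcie_gen4_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s for a number of lanes."""
    bits_per_s = lanes * PCIE_GEN4_GT_PER_S * 1e9 * ENCODING_EFFICIENCY
    return bits_per_s / 8 / 1e9


def total_lanes(ports: int, lanes_per_port: int) -> int:
    """Total lanes provided by a set of identical multi-lane ports."""
    return ports * lanes_per_port


if __name__ == "__main__":
    # Client-facing lanes and internal switch fabric lanes from the text.
    print(f"64 lanes:  {pcie_gen4_gb_per_s(64):.1f} GB/s theoretical")
    print(f"288 lanes: {pcie_gen4_gb_per_s(288):.1f} GB/s theoretical")
    # 16 ports of x4 SAS-4 connectivity.
    print(f"SAS-4 lanes: {total_lanes(16, 4)}")
```

Under these assumptions, the 64 client-facing lanes have a theoretical ceiling above the quoted 90GB/s figure, which is consistent with delivered throughput falling below raw link capacity.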

SMALL, YET POWERFUL FLEXIBILITY

SFA NVMe platforms are available as block storage appliances and integrated high-performance file appliances. DDN’s EXAScaler® file system enables the SFA NVMe storage building block model to scale out the parallel file namespace with maximal efficiency. Built, deployed, and supported by the experts in data-intensive workloads, these appliances deliver the ultimate application and workload performance by fusing the advantages of DDN’s SFAOS with the industry-leading EXAScaler® parallel file system.

WORKLOAD ACCELERATING NVME STORAGE

COMMON USE CASES

The flexibility and capabilities of the SFA NVMe platforms lend themselves to a wide variety of applications:

- Artificial Intelligence

- Analytics

- Deep Learning

- Content Distribution

- High-IOPS Telemetry

AI400X3

Blazing Fast Performance for AI and Beyond

The AI400X3 sets a new benchmark for NVMe data platforms, with enhanced performance and support for up to 24 dual-port NVMe drives. Powered by the DDN EXAScaler® parallel file system and integrated with NVIDIA® BlueField-3 SuperNIC™ and other NVIDIA technologies, the AI400X3 delivers unmatched speed and efficiency for AI, HPC, and enterprise-scale data workloads.

EFFORTLESS MANAGEMENT AT SCALE

Simplify operations at any scale with EXAScaler’s intuitive multi-tenancy and robust monitoring tools. Built for efficiency and ease of use, EXAScaler® ensures consistent, reliable performance across demanding deployments, giving you the visibility and confidence to manage infrastructure effortlessly.

COMPRESSION THAT ACCELERATES

Maximize storage efficiency with client-side compression that slashes data sizes without slowing you down. Unlike competitors that sacrifice performance, DDN’s platform keeps your data moving fast, even when compression is fully engaged, making it ideal for demanding workloads.

BUILT FOR TOMORROW WITH SCALABILITY

Designed to evolve with your needs, the AI400X3 supports scalability with NVMe-oF™ expansion coming soon. Whether you’re increasing capacity or enhancing AI capabilities, this platform ensures you’re prepared for the data demands of the future.

SUPERCHARGE WORKLOADS WITH DDN’S NEXT GENERATION DATA PLATFORM

COMMON USE CASES

The AI400X3 platform offers the flexibility and capabilities needed to support a wide variety of applications:

- Artificial Intelligence

- Analytics

- Deep Learning

- Generative AI

- Machine Learning

- HPC

Scalable Infrastructure for End-to-End AI

The DDN AI2000 is purpose-built to provide unmatched efficiency, industry-leading performance, and enterprise-grade reliability to support modern AI workflows and applications.

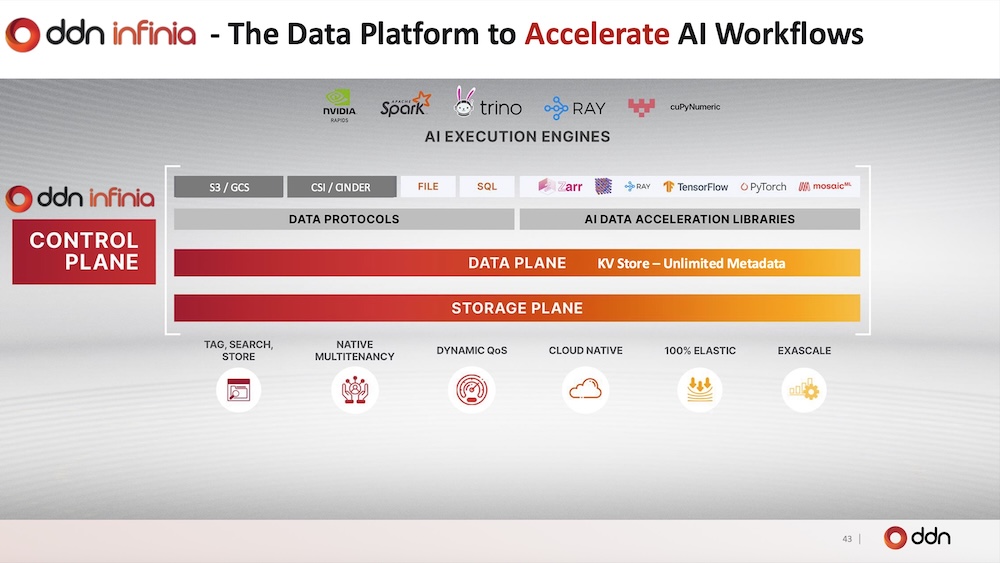

Powered by the DDN Infinia Platform, the AI2000 is engineered to accelerate AI inference, data analytics, model preparation, and loading. The DDN AI2000 seamlessly integrates into AI data pipelines, delivering ultra-low latency and effortless scalability, ensuring enterprises extract maximum intelligence from their data while minimizing complexity and cost.

REDUCE COMPLEXITY

Delivers a unified global view of distributed datasets, enabling end-to-end AI across core, cloud, and edge. By breaking down data silos, it streamlines AI data preparation, model loading, analytics, and inference for more reliable insight.

ACCELERATE INNOVATION

Optimizes every AI workload with low-latency performance. Its advanced metadata engine minimizes data movement, accelerating inference with RAG-enabled indexing. Keeps GPUs fully utilized for maximum efficiency.

CUT COSTS

Lowers CAPEX by up to 75% with reduced power, cooling, and space requirements. Leverages metadata for search and in-place tagging to achieve 10x data reduction, minimizing unnecessary data movement.

SCALE RELIABLY & SECURELY

Simplifies governance and control with built-in reliability and security. Fault tolerance, encryption, and multi-tenancy ensure continuous availability and protection of critical AI data at any scale.

MOVE BEYOND ARTIFICIAL WITH THE DDN DATA INTELLIGENCE PLATFORM

PURPOSE-BUILT FOR END-TO-END AI

The DDN AI2000 powered by the Infinia Platform unifies data across your organization with unmatched scalability, simplicity, and flexibility, enabling you to drive AI innovation and success for:

- AI Inference

- Data Analytics

- Model Preparation

- Model Loading

About DDN

DataDirect Networks (DDN) is the world’s leading big data storage supplier to data-intensive, global organizations. DDN has designed, developed, deployed, and optimized systems, software, and solutions that enable enterprises, service providers, research facilities, and government agencies to generate more value and to accelerate time to insight from their data and information, on premises and in the cloud.